I just got the excellent book Applied Predictive Modeling, by Max Kuhn and Kjell Johnson [1]. The book is aimed at a broad audience and focuses on the construction and application of predictive models. Besides going through the necessary theory in a not-so-technical way, the book provides R code at the end of each chapter, which lets the reader replicate the techniques it describes. Most of these techniques can be applied through calls to functions from the caret package, which is a very convenient package to have around when doing predictive modeling.

Chapter 3 is about unsupervised techniques for pre-processing your data. The pre-processing step happens before you start building your model. The book identifies inadequate data pre-processing as one of the common reasons why predictive models fail. Unsupervised means that the transformations you perform on your predictors (covariates) do not use information about the response variable.
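As a small illustration of what "unsupervised" means here, the sketch below computes centering and scaling statistics from the predictors alone and then reuses them on new observations. It uses only base R; the data frames `train` and `new` are made up for the example and no response variable appears anywhere.

```r
set.seed(1)

# Hypothetical training and new predictors (no response involved)
train <- data.frame(x1 = rnorm(100, mean = 5, sd = 2),
                    x2 = rexp(100, rate = 10))
new   <- data.frame(x1 = rnorm(10, mean = 5, sd = 2),
                    x2 = rexp(10, rate = 10))

# Statistics are estimated from the training predictors only
centers <- colMeans(train)
scales  <- sapply(train, sd)

# The same statistics are applied to both data sets
train_s <- scale(train, center = centers, scale = scales)
new_s   <- scale(new,   center = centers, scale = scales)

round(colMeans(train_s), 10)  # training columns are now centered
```

The new observations are transformed with the training statistics, so their column means will generally not be exactly zero.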

Feature engineering

How your predictors are encoded can have a significant impact on model performance. For example, the ratio of two predictors may be more effective than using the two predictors separately. This will depend on the model used as well as on the particularities of the phenomenon you want to predict. The manufacturing of predictors to improve prediction performance is called feature engineering. To succeed at this stage you should have a deep understanding of the problem you are trying to model.
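To make the ratio example concrete, here is a minimal sketch on simulated data. Everything in it is an assumption made for illustration: the predictors `a` and `b`, the response `y`, and the fact that `y` is generated from the ratio `a / b`. It simply compares a linear model on the raw predictors with one on the engineered ratio.

```r
set.seed(1)

# Hypothetical data: the response depends on a / b, not on a and b additively
a <- runif(500, min = 1, max = 10)
b <- runif(500, min = 1, max = 10)
y <- 2 * (a / b) + rnorm(500, sd = 0.1)

dat <- data.frame(y = y, a = a, b = b, ratio = a / b)

# Linear model on the two raw predictors
fit_raw <- lm(y ~ a + b, data = dat)

# Linear model on the engineered ratio predictor
fit_ratio <- lm(y ~ ratio, data = dat)

# Compare in-sample R-squared of the two encodings
c(raw = summary(fit_raw)$r.squared,
  ratio = summary(fit_ratio)$r.squared)
```

Here the ratio wins because the data were built that way. With real data, whether such a feature helps depends on the model and the problem, as noted above.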

Data transformations for individual predictors

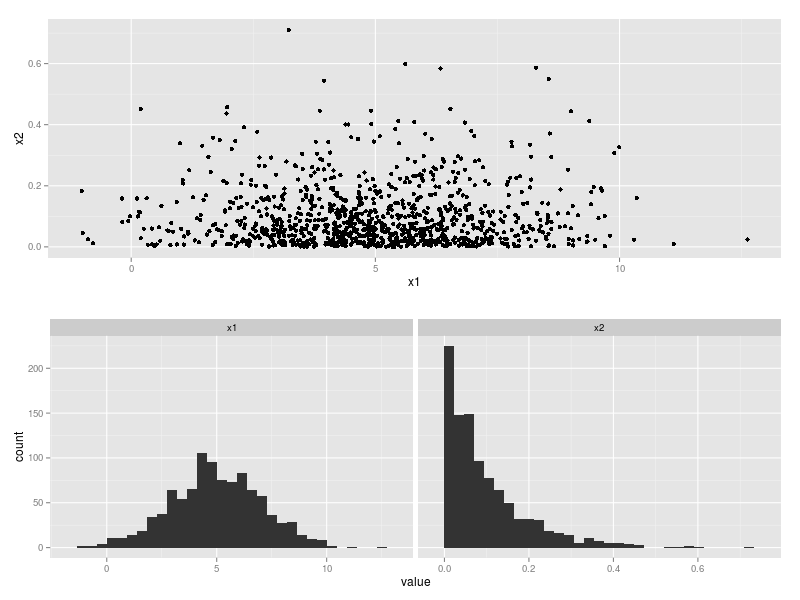

A good practice is to center, scale and apply skewness transformations to each of the individual predictors. This practice gives more stability to the numerical algorithms used later when fitting different models, and it also improves the predictive ability of some models. The Box-Cox transformation [2], centering and scaling can all be applied using the preProcess function from caret. Assume we have a predictors data frame with two predictors, x1 and x2, depicted in Figure 1.

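Then the following code

    set.seed(1)
    # Simulate the two predictors: x1 is roughly symmetric, x2 is right-skewed
    predictors = data.frame(x1 = rnorm(1000, mean = 5, sd = 2),
                            x2 = rexp(1000, rate = 10))

    library(caret)

    # Estimate the Box-Cox, centering and scaling parameters from the data
    trans = preProcess(predictors,
                       method = c("BoxCox", "center", "scale"))
    # Apply the estimated transformations to the predictors
    predictorsTrans = data.frame(
      trans = predict(trans, predictors))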

will estimate the of the Box and Cox transformation

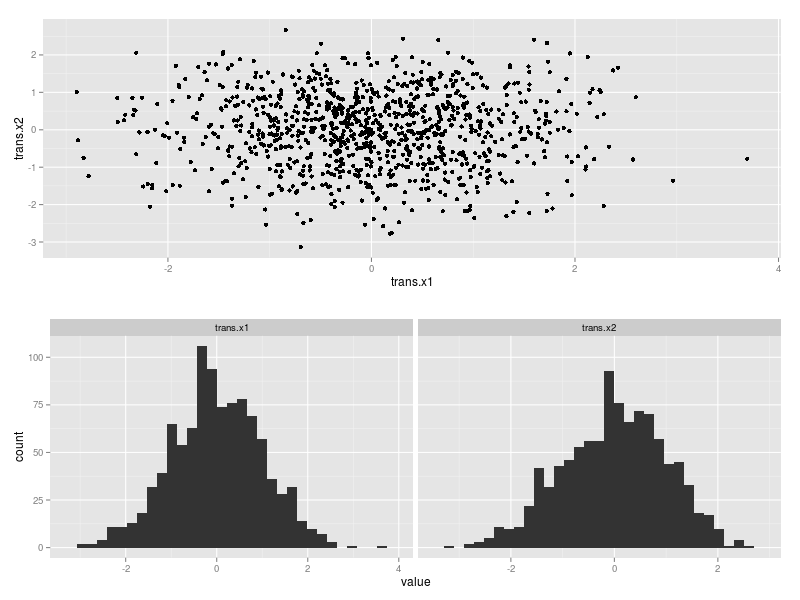

apply it to your predictors that take on positive values, and then center and scale each one of the predictors. The new data frame predictorsTrans with the transformed predictors is depicted in Figure 2.
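For readers who want to see roughly what happens to a skewed predictor, the following is a base R sketch of the same three steps done by hand. It is not caret's implementation: the helpers `box_cox`, `profile_loglik` and `skewness` are written just for this example, the search interval of -2 to 2 for λ is an assumption, and the λ it finds may differ somewhat from the one preProcess reports.

```r
set.seed(1)
x2 <- rexp(1000, rate = 10)  # strictly positive, right-skewed predictor

# Box-Cox transform for a given lambda (log transform when lambda is ~0)
box_cox <- function(y, lambda) {
  if (abs(lambda) < 1e-8) log(y) else (y^lambda - 1) / lambda
}

# Profile log-likelihood of lambda for an intercept-only normal model
profile_loglik <- function(lambda, y) {
  z <- box_cox(y, lambda)
  n <- length(y)
  sigma2 <- mean((z - mean(z))^2)  # ML estimate of the variance
  -n / 2 * log(sigma2) + (lambda - 1) * sum(log(y))
}

# Estimate lambda by maximizing the profile log-likelihood
lambda_hat <- optimize(profile_loglik, interval = c(-2, 2),
                       y = x2, maximum = TRUE)$maximum

# Apply Box-Cox, then center and scale
x2_bc    <- box_cox(x2, lambda_hat)
x2_trans <- (x2_bc - mean(x2_bc)) / sd(x2_bc)

# Simple moment-based sample skewness, before and after
skewness <- function(v) mean((v - mean(v))^3) / sd(v)^3
c(lambda = lambda_hat,
  skew_before = skewness(x2),
  skew_after = skewness(x2_trans))
```

The transformed predictor has mean 0 and standard deviation 1 by construction, and its skewness should be much closer to zero than that of the original x2.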

I will write more about the book and the caret package in future posts. The complete code that I used here to simulate the data, generate the pictures and transform the data is available as a gist.

References:

[1] Kuhn, M. and Johnson, K. (2013). Applied Predictive Modeling. Springer.

[2] Box, G. and Cox, D. (1964). An analysis of transformations. Journal of the Royal Statistical Society. Series B (Methodological), 26(2), 211-252.